Φ-Framework Report: Mistral AI

Organizational coherence analysis through the See / Spec / Split lens

I. The Company at a Glance

Mistral AI was founded in April 2023 by three French researchers in their early thirties: Arthur Mensch (CEO, ex-DeepMind), Timothée Lacroix (CTO, ex-Meta/Llama), and Guillaume Lample (Chief Scientist, ex-Meta). A fourth co-founder, Cédric O, handles government relations, drawing on his experience as former French Secretary of State for Digital. The company grew from 35 employees in early 2024 to roughly 800 by early 2026.

Mistral builds foundation models (Mistral Small, Medium, Large, Codestral, Magistral), operates an API platform (La Plateforme), and runs a consumer chatbot (Le Chat). Revenue comes from pay-per-token API usage, enterprise subscriptions with data residency guarantees, and Le Chat Pro at $14.99/month. About 60% of revenue is from European clients.

The organizational design claim: a small, research-driven team that ships frontier models with a fraction of the compute and headcount of American labs. Mensch caps teams at no more than five people. The open-source strategy builds developer adoption; the commercial platform captures enterprise value.

II. Declared Organizational Structure

Mistral’s coordination architecture reflects its origin as a three-person research lab that scaled 20x in under two years:

| Principle | Mechanism | Φ Channel |
|---|---|---|
| Founding trio | CEO (business/strategy), CTO (engineering/infrastructure), Chief Scientist (research/models). Fourth co-founder handles government. | Φcomm (direct coordination) |
| Small teams | Mensch’s stated preference: teams of no more than five | Φcomm (face-to-face within team) |
| Open-source as adoption engine | Release open-weight models (Mistral Small, Ministral) to build developer base; monetize through proprietary platform and enterprise | Φsurface (community feedback as signal) |
| Forward-deployed engineers | Engineers embedded at enterprise clients (similar to Palantir model) to handle integration | Φcomm (high-touch client work) |
| Government liaison | Cédric O navigates EU regulation, French government partnerships, defense contracts | Φcomm (political relationship management) |

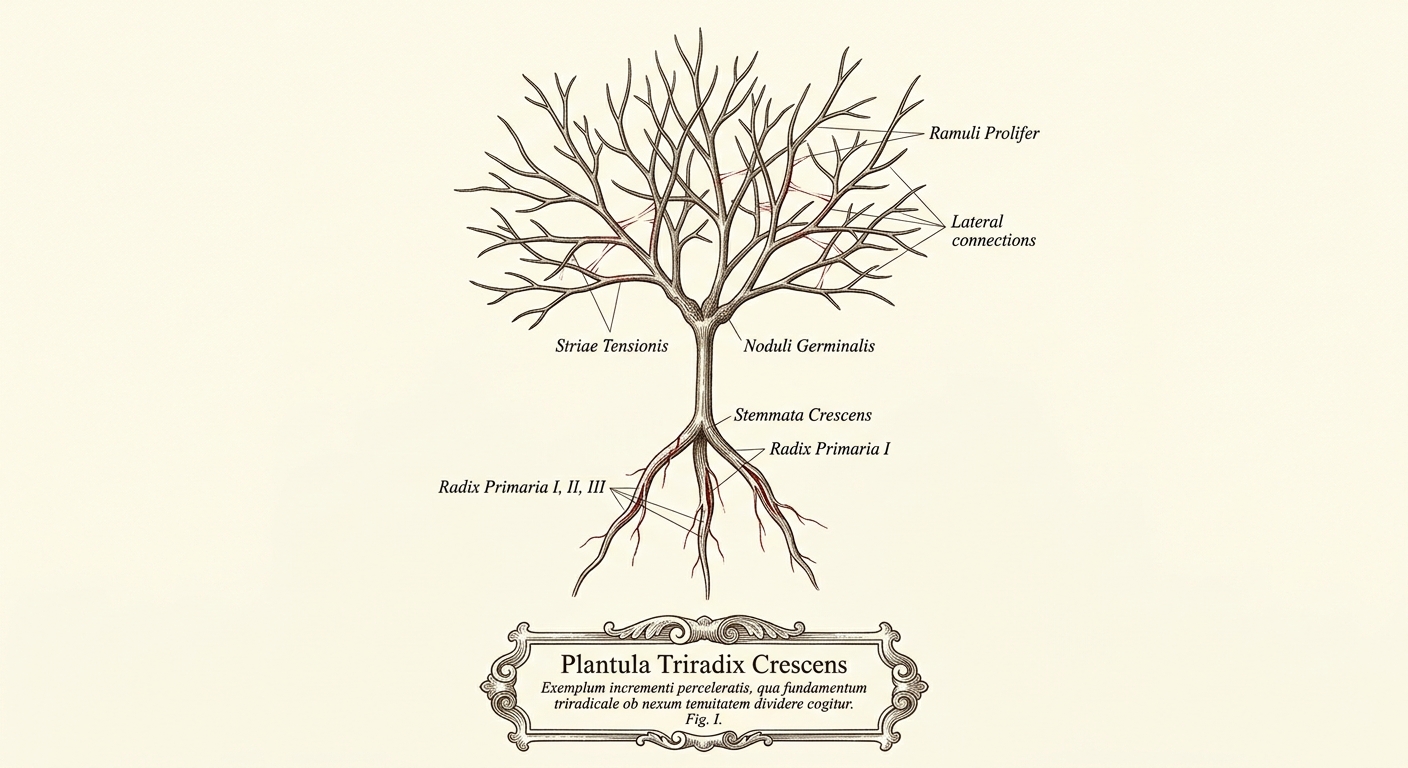

III. The Growth Fracture

Mistral went from 35 to 800 employees in under two years. At 35, the co-founders could hold the entire coordination graph in their heads. Every person knew every other person. Φcomm was sufficient because the communication cost scaled as N(N-1)/2 and N was tiny.

At 800, the graph has roughly 500x as many potential pairwise links (319,600 versus 595 at 35 people). The co-founders cannot maintain direct relationships with everyone. The framework predicts (Prediction 5) that when an organization grows past the point where Φcomm alone can carry Rreq, it must develop formal hierarchy, documented protocols, or shared substrates. Mistral is in the middle of this transition, and the transition is incomplete.
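The N(N-1)/2 scaling can be checked directly against the two headcounts quoted in this report. The short sketch below only does that arithmetic; the function name is illustrative and not part of the framework.

```python
def pairwise_links(n: int) -> int:
    """Number of distinct person-to-person pairs among n people: n(n-1)/2."""
    return n * (n - 1) // 2


links_at_35 = pairwise_links(35)    # 595
links_at_800 = pairwise_links(800)  # 319600

# Ratio of potential links after growth versus before (about 537x).
growth_in_links = links_at_800 / links_at_35

print(links_at_35, links_at_800, round(growth_in_links))
```

Headcount grew by about 23x, while the number of potential pairwise links grew by about 537x, which is why a channel that was cheap at 35 people stops being sufficient at 800.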

1. The Founding Trio Bottleneck

The four co-founders divide the org cleanly: research (Lample), engineering (Lacroix), business/strategy (Mensch), and government (Cédric O). Cross-domain coordination between these four pillars runs through the founders’ personal communication. This is the same pattern as NVIDIA (star topology through Jensen), but with three stars instead of one. Mistral has roughly 1/50th of NVIDIA’s headcount, yet the strain is already visible because of the growth rate.

2. The Φtacit Concentration

Mistral recruited heavily from two specific networks: Meta’s Llama team and the French grande école system. 55 of 99 authors on the June 2025 Magistral paper had French academic credentials. This creates high Φtacit among a core group that shares educational background, professional networks, and research conventions. But it also means coordination capacity is concentrated in a cultural in-group. As the org grows and diversifies geographically (London, Amsterdam, Palo Alto), the Φtacit that held the early team together fails to reach new hires from different networks.

3. The Open-Source/Commercial Tension

Mistral’s founding story centers on openness: releasing model weights for anyone to download and use. This generated enormous developer adoption (developers downloaded Mistral 7B 3.4M+ times) and established the brand. But revenue comes from the commercial platform, enterprise deployments, and Le Chat subscriptions. The tension: every model released open-weight reduces the moat around the commercial offering. The company navigates this by releasing smaller models open-weight and keeping frontier models behind the API. This dual strategy requires constant judgment calls about what to open and what to keep, and those calls route through the founding trio.

IV. Φ-Channel Analysis

| Channel | Domain | Evidence | Level |
|---|---|---|---|
| Φsurface | Model development | Benchmarks (HumanEval, MMLU), open-weight downloads as adoption signal, internal eval infrastructure. Research teams can see how models perform. | high |
| Φsurface | Customer/revenue | API usage metrics, Le Chat engagement, enterprise pipeline. Revenue grew from ~$16M (end 2024) to ~$400M ARR (Jan 2026), suggesting the team can see what sells. | moderate |
| Φsurface | Organization | No public evidence of internal coordination dashboards. At 800 people across multiple offices and continents, cross-team visibility likely depends on Slack/meetings. | low |
| Φformal | Model release process | Rapid, frequent model releases (12+ models in 2025 alone) suggest a defined release pipeline. But the open/commercial split decision for each model appears to be ad hoc. | moderate |
| Φformal | Enterprise delivery | Forward-deployed engineers suggest a Palantir-style high-touch model. The company likely formalizes integration protocols per client (Azure, CMA CGM, French military). No evidence of standardized onboarding across customers. | low |
| Φtacit | Research coordination | Core team shares Meta/Llama and French grande école backgrounds. High shared context within the in-group. Magistral paper shows tight research collaboration. | high |
| Φtacit | Cross-team coordination | 20x headcount growth in 2 years dilutes the founding team’s tacit patterns. New hires from different networks lack access to the implicit coordination structure. | low |
| Φcomm | Founding trio decisions | Three co-founders in direct communication. Strategic decisions (open vs commercial, partnerships, model roadmap) resolved through founder dialogue. | high |
| Φcomm | Scaling coordination | At 800 people, the founding trio cannot participate in every cross-team decision. Coordination beyond the founders must rely on channels that have not yet been built. | misallocated |

V. Where Time Dies

| Queue | What Waits | Why It Waits | Severity |
|---|---|---|---|
| Open/commercial boundary | Each new model needs a release decision: open-weight or proprietary | No documented criteria for the decision. Each case routes through the founding trio. As model release cadence increases, the decision queue grows. Missing Φformal. | Critical |
| Enterprise onboarding | New enterprise clients need integration, data residency configuration, custom deployment | Forward-deployed engineers handle each client bespoke. No standardized integration playbook. Scales linearly with headcount, not with process. Missing R (rule set) in R/F/K. | Critical |
| Cross-team coordination | Decisions spanning research, engineering, product, and government (e.g., military partnership requirements affecting model architecture) | Four pillars coordinate through co-founder bandwidth. At 800 people, most cross-pillar requests wait for founder attention. Missing lateral Φsurface. | High |
| New-hire integration | Employees from outside the Meta/grande école network need access to implicit coordination patterns | The Φtacit that makes the core team efficient is encoded in shared background and relationships, not in documentation. No onboarding pathway into the coordination culture. Missing Φformal for culture transfer. | High |
| Support and documentation | Developer and enterprise support requests | Users report support as “notoriously slow and unresponsive.” Fewer pre-built integrations and community guides than competitors. Missing F (failure detector) for support quality. | High |

VI. The Paradox of the Efficient Underdog

Mistral shipped its first model four months after founding. It reached frontier-competitive quality with 1,500 H100 GPUs when OpenAI used orders of magnitude more. It grew from $16M to $400M ARR in a single year. By any measure of output per person and output per dollar, Mistral is among the most efficient AI organizations ever built.

The efficiency comes from tight Φtacit within the founding core: a small group that shares research conventions, trusts each other’s judgment, and can coordinate through conversation alone. This is the Rnovel-rich regime where Φcomm is the right channel. Frontier AI research is genuinely equivocal (conflicting interpretations of what architecture, training approach, and product direction will work), and the founding trio resolves equivocality through direct dialogue faster than any protocol could.

The paradox: the same small-team, high-trust, Φcomm-heavy model that produces extraordinary research output breaks down for the Rroutine that grows with scale. Enterprise delivery, support, cross-team coordination, and organizational visibility are routine problems. They need Φformal and Φsurface. Mistral still lacks these because the founding trio model has worked so well for research that the org has not felt the cost of their absence elsewhere. $3B in funding and a $14B valuation relax selection pressure. The competitive pressure comes from model quality, where Mistral performs well, not from organizational efficiency, which scale has not yet tested.

VII. See / Spec / Split Applied

1. See the Queue

Mistral has strong Φsurface for model performance (benchmarks, evals) but weak Φsurface for organizational coordination. At 800 people across multiple offices and continents, the founding trio cannot see every cross-team dependency, every blocked enterprise deal, or every support request aging in queue.

First move: An internal dashboard showing enterprise pipeline status, cross-team dependency blockers, and support queue age. The founding trio should be able to see what is waiting on them and what can be resolved without them.
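As a minimal sketch of what such a dashboard would compute, the snippet below sorts waiting items by age and separates those that actually need a founder. Everything here is hypothetical: the `QueueItem` fields, the queue names, and the sample dates are illustrative assumptions, not a description of any Mistral system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class QueueItem:
    queue: str           # hypothetical queue name, e.g. "support"
    opened: date         # when the item started waiting
    needs_founder: bool  # True only if a founder decision is required


def queue_report(items: list[QueueItem], today: date) -> list[tuple[str, int, bool]]:
    """Return (queue, age_in_days, needs_founder) rows, oldest first."""
    rows = [(i.queue, (today - i.opened).days, i.needs_founder) for i in items]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Illustrative data only.
items = [
    QueueItem("enterprise", date(2026, 1, 20), needs_founder=True),
    QueueItem("support", date(2026, 1, 3), needs_founder=False),
    QueueItem("cross-team", date(2026, 1, 27), needs_founder=False),
]
report = queue_report(items, today=date(2026, 1, 31))
waiting_on_founders = [row for row in report if row[2]]
```

The point of the split is the one made above: the report shows both what is aging and which of those items could be resolved without the founding trio.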

2. Spec the Handoff

The open/commercial boundary for each model release has no documented criteria. Enterprise onboarding has no standardized playbook. New-hire integration into the coordination culture has no pathway. These are all handoffs that currently depend on the founding trio’s judgment or the founding team’s Φtacit.

Second move: Write down the decision criteria for the open/commercial split (model size threshold, competitive positioning, customer commitments). Create a standardized enterprise integration playbook that forward-deployed engineers can execute without escalating to founders. Convert the founding team’s Φtacit into Φformal before growth dilutes it further.
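One way to picture "written-down criteria" is as an explicit rule that anyone can apply and that escalates only the cases it cannot settle. The sketch below uses the three criteria named above (size threshold, competitive positioning, customer commitments); the field names, the 30B-parameter threshold, and the rule ordering are assumptions made for illustration, not Mistral policy.

```python
from dataclasses import dataclass

# Hypothetical size threshold, in billions of parameters.
OPEN_WEIGHT_MAX_PARAMS_B = 30.0


@dataclass
class ReleaseCandidate:
    name: str
    params_billions: float
    is_frontier: bool          # competitive positioning
    customer_exclusive: bool   # an existing customer commitment applies


def release_track(model: ReleaseCandidate) -> str:
    """Apply the documented criteria in order; escalate only the leftovers."""
    if model.customer_exclusive:
        return "proprietary"
    if model.is_frontier:
        return "proprietary"
    if model.params_billions <= OPEN_WEIGHT_MAX_PARAMS_B:
        return "open-weight"
    return "escalate-to-founders"


small = ReleaseCandidate("example-small", 8.0, False, False)
flagship = ReleaseCandidate("example-flagship", 120.0, True, False)
midsize = ReleaseCandidate("example-mid", 70.0, False, False)
```

Under these assumed rules, `small` resolves to open-weight and `flagship` to proprietary without any founder involvement; only `midsize` falls through to escalation.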

3. Split the Traffic

The founding trio currently handles both Rnovel (what model to build next, which partnership to pursue, how to position against OpenAI) and Rroutine (enterprise deal approval, support escalations, cross-team priority conflicts). These require different channels.

Third move: Route Rnovel (research direction, strategic partnerships, regulatory positioning) through the founding trio’s Φcomm, where equivocality demands rich dialogue. Route Rroutine (enterprise onboarding, support, cross-team dependencies) through Φformal and Φsurface, freeing the founding trio for the decisions that genuinely require their judgment.
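The routing described above can be sketched as a small lookup. The request-type strings and the specific assignment of each routine type to Φformal or Φsurface are illustrative assumptions; the text only says that routine work should go through those two channels and novel work through Φcomm.

```python
from enum import Enum


class Channel(Enum):
    COMM = "founder dialogue"
    FORMAL = "documented protocol"
    SURFACE = "dashboard or self-serve tool"


# Rnovel: equivocal decisions that need rich dialogue.
NOVEL_TYPES = {"research_direction", "strategic_partnership", "regulatory_positioning"}

# Rroutine: hypothetical assignment of routine work to cheaper channels.
ROUTINE_ROUTES = {
    "enterprise_onboarding": Channel.FORMAL,
    "support_escalation": Channel.FORMAL,
    "cross_team_dependency": Channel.SURFACE,
}


def route(request_type: str) -> Channel:
    """Send novel requests to the founders, routine ones elsewhere."""
    if request_type in NOVEL_TYPES:
        return Channel.COMM
    # Unrecognized request types default to the founders until a rule exists.
    return ROUTINE_ROUTES.get(request_type, Channel.COMM)
```

Defaulting unknown types to Φcomm is a deliberate choice in this sketch: a request nobody has classified yet is treated as novel, and each time one recurs it becomes a candidate for a new routine route.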

VIII. The Scaling Threshold

Mistral faces the classic startup transition: the coordination model that worked at 35 people is straining at 800 and will break at 2,000. The framework predicts (Prediction 5) three possible convergence paths:

Path 1: Add hierarchy. Create VP-level leaders between the founding trio and the teams. This is the conventional path. It works but introduces the management overhead that Mensch has explicitly said he wants to avoid (teams of no more than five).

Path 2: Add protocols. Write down the handoff specs, decision criteria, and coordination standards that the founding trio currently carries in their heads. GitLab’s 2,000-page handbook is the extreme version. This preserves flat structure but requires upfront Φformal investment.

Path 3: Add substrates. Build internal dashboards, automated routing, and self-serve tools that handle Rroutine without human coordination. This is the most aligned with Mistral’s engineering culture, but requires prioritizing internal tooling over customer-facing product.

The structural risk is that Mistral delays this transition because the research output keeps the external narrative positive. The competitive pressure is on model quality, not organizational scalability. By the time the organizational debt compounds visibly (enterprise churn from slow support, talent attrition from coordination friction, missed ship dates from cross-team blockage), the Φtacit of the founding team may already be too diluted to recover cheaply.

IX. Summary Assessment

| Dimension | Rating | Notes |
|---|---|---|
| Φsurface (models) | Strong | Benchmarks, evals, download counts. Research knows how models perform. |
| Φsurface (organization) | Weak | No evidence of cross-team visibility tooling. 800 people across multiple offices and continents. |
| Φformal (research pipeline) | Moderate | 12+ model releases in 2025 suggest a process exists. Open/commercial split is ad hoc. |
| Φformal (enterprise delivery) | Weak | Bespoke per client. No standardized playbook. Support is slow. |
| Φtacit (core team) | Strong | Shared Meta/grande école background. Tight research collaboration. Diluting with growth. |
| Φcomm (founding trio) | Strong | Three co-founders coordinate well. Bandwidth is the constraint, not quality. |
| Φcomm (scaling) | Strained | 800 people, 35-person coordination model. Transition incomplete. |
| Competitive resilience | Moderate | Output per dollar is exceptional. Compute gap with US labs is narrowing (ASML, NVIDIA, new data center) but still large. |
| Selection pressure | Relaxed | $3B funding, $14B valuation, European sovereignty narrative. Competitive pressure on model quality, not org efficiency. |

Four coordination channels, one parent:

Φsurface: substrates that make state visible without conversation.

Φformal: documented protocols that encode expectations without negotiation.

Φtacit: learned routines and institutional memory. Accumulates with time, destroyed by reorgs and turnover.

Φcomm: real-time human communication. Most expressive, most expensive, least scalable.

Φrule = Φformal + Φtacit: total protocol capacity.

SEE the queue: make work visible

SPEC the handoff: define “ready”

SPLIT the traffic: not everything needs the bottleneck

Applied recursively. Stop at the compression floor.